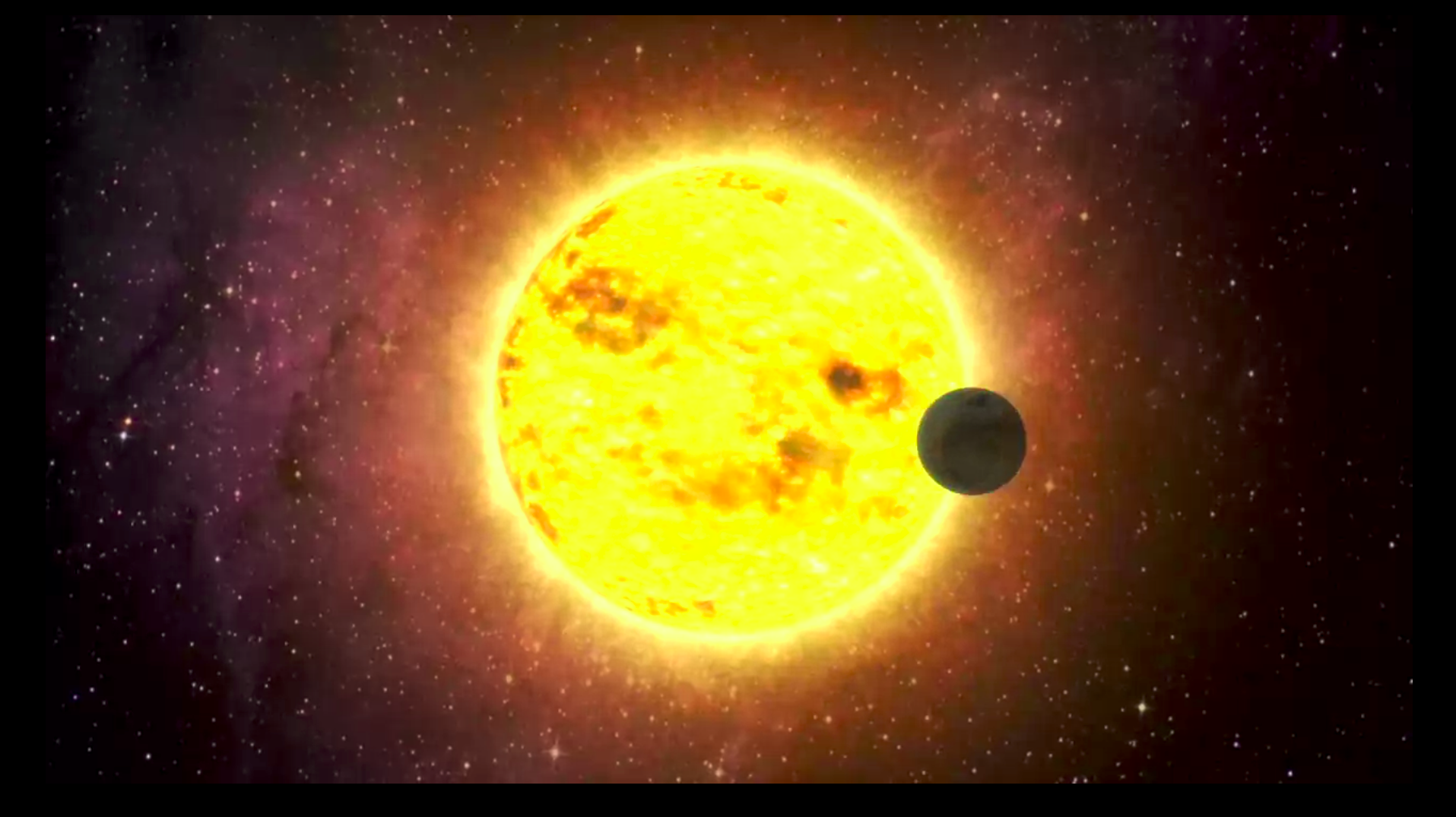

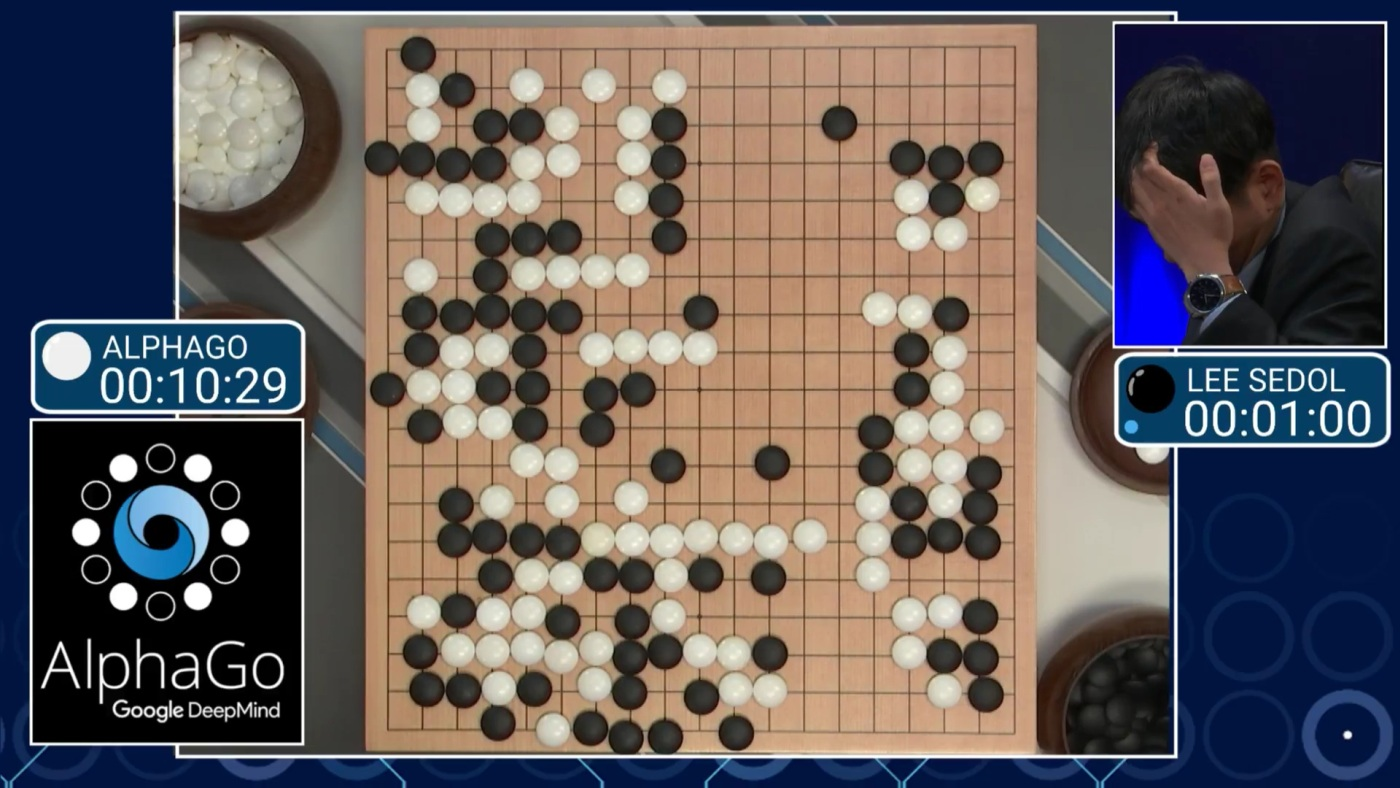

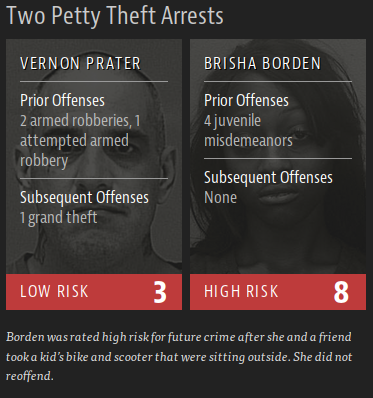

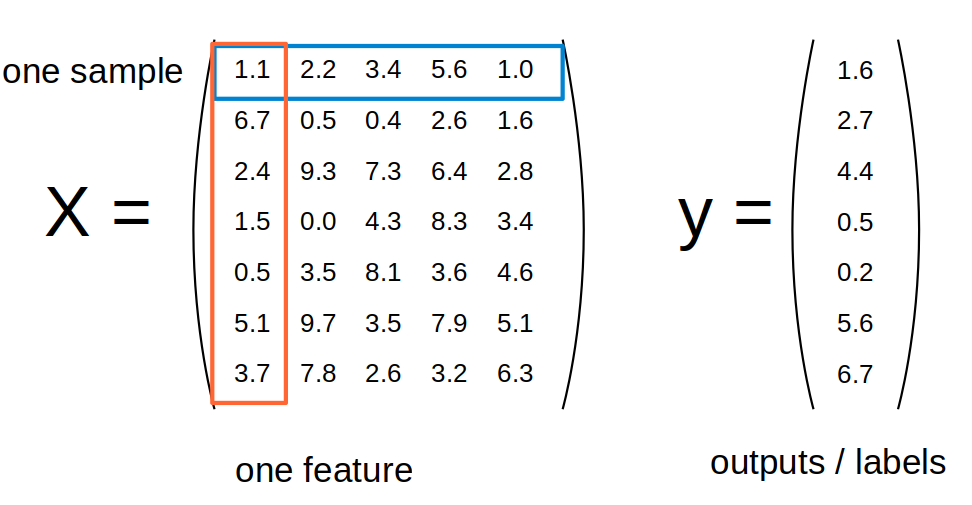

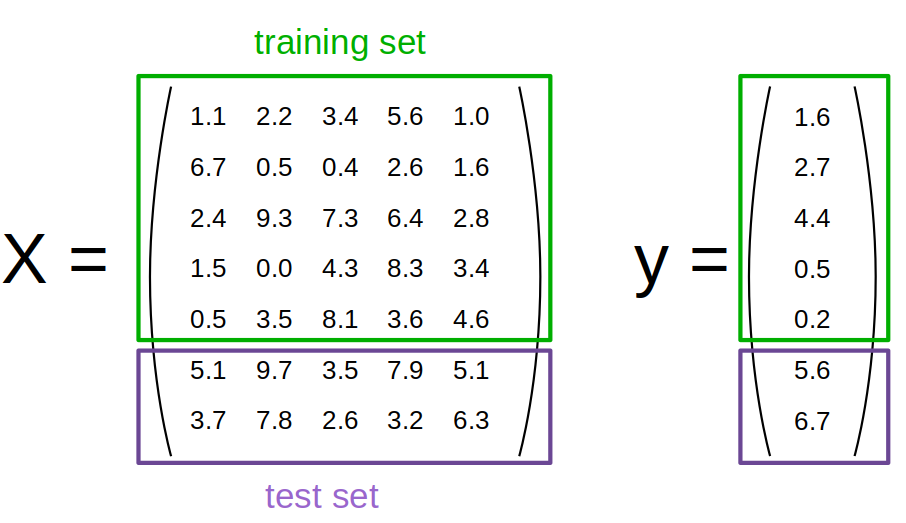

class: center, middle  ### Introduction to Machine learning with scikit-learn # Introduction Andreas C. Müller Columbia University, scikit-learn .smaller[https://github.com/amueller/ml-training-intro] --- # Other Resources .center[    ] Lecture: http://www.cs.columbia.edu/~amueller/comsw4995s18/schedule/ Videos and more slides! ??? FIXME JAKE book border There are three books that I recommend looking into for this course. Definitely check out my book, Introduction to machine learning with Python. You can find the PDF on courseworks. My book should be a relatively easy read and it’s quite short. The second one is Applied predictive modelling by Max Kuhn, which goes a bit deeper. This is about the level I want to go to in this course. You can get it for free at springer link, I posted a link in courseworks. These two are really the essential ones. Finally, there's Elements of statistical learning, also known as ESL or the stanford book, by Hastie Tibshibani and Friedman is a classic for a more theoretical view. You can get it for free on the authors website. If you want to brush up on your Python skills, I also recommend the Python Data Science Handbook by my friend Jake Vanderplas. --- class: center, middle # What and Why of Machine Learning ??? I first want to talk about what is machine learning, and why do we want it. As you’re in this course, you’re probably already somewhat convinced that it’s useful, but I briefly want to give my own perspective. In general, today will not be very meaty and be more a loose collections of ideas and directions. The next class we will go down to the metal much more. --- class: center, middle # What is machine learning? ??? Machine learning is about extracting knowledge from data. It is closely related to statistics and optimization. What distinguishes machine learning is that it is very focused on prediction. We want to learn from a large dataset how to make decisions for future observations. You could say that the input to a machine learning program is the dataset, and the output is a program that can make decisions on future observations. Machine learning is really widely used now, and I want to give you some examples that most of you probably already interacted with today. --- # Science! .center[  ] ??? That was some of the flashy, every day live applications. Something that might get you VC funding. There’s also a lot of machine learning applications in less visible, but equally important - or more important - applications in science. There is more and more personalized cancer treatment – via machine learning. More medical diagnosis, and more drug discovery are using machine learning. The higgs boson couldn’t have been found without machine learning, and the same is true for many earth like planets in other solar systems. Which is shown using an artists illustration here. In reality you would have a single pixel, containing the sun and the planet. You can find exoplanets by checking whether the star gets periodically slightly darker, in which case you found a planet. Of course with machine learning! Machine learning is an essential in many data driven sciences now! So no matter where you want to go with data, you need machine learning. But what does that mean? Next, I want to give you a little taxonomy of machine learning methods. --- class: center, middle # Types of Machine Learning ??? There are three main branches of machine learning. Who can name them? --- # Types of Machine Learning .padding-top[ - ## Supervised - ## Unsupervised - ## Reinforcement ] ??? They called supervised learning, unsupervised learning and reinforcement learning. What are they? This course will heavily focus on supervised learning, but you should be aware the other types and their characteristics. We will do some unsupervised learning, but no reinforcement learning. Supervised learning is the most commonly used type in industry and research right now, though reinforcement learning becomes increasingly important. --- class: center # Supervised Learning .larger[ $$ (x_i, y_i) \propto p(x, y) \text{ i.i.d.}$$ $$ x_i \in \mathbb{R}^p$$ $$ y_i \in \mathbb{R}$$ $$f(x_i) \approx y_i$$ ] ??? In supervised learning, the dataset we learn form is input-output pairs (x_i, y_i), where x_i is some n_dimensional input, or feature vector, and y_i is the desired output we want to learn. Generally, we assume these samples are drawn from some unknown joint distribution p(x, y). In statistics, x_i might be called independent variables and y_i dependent variable. What does iid mean? We say they are drawn iid, which stands for independent identically distributed. In other words, the x_i, y_i pairs are independent and all come from the same distribution p. You can think of this as there being some process that goes from x_i to y_i, but that we don’t know. We write this as a probability distribution and not as a function since even if there is a real process creating y from x, this process might not be deterministic. The goal is to learn a function f so that for new inputs x for which we don’t observe y, f(x) is close to y. This approach is very similar to function approximation. The name supervised comes from the fact that during learning, a supervisor gives you the correct answers y_i. --- # Examples of Supervised Learning ??? Here are some examples of supervised learning. Given an array of test results from a patient, does this patient have diabetes? The x_i would be the different test results, and y_i would be diabetes or no diabetes. Given a piece of a satellite image, what is the terrain in this image? Here x_i would be the pixels of the image, and y_i would be the terrain types. This is often used to automate manual labor. For example, you might annotate part of a dataset manually, then learn a machine learning model from this annotations, and use the model to annotate the rest of your data. --- # Unsupervised Learning .padding-top[ $$ x_i \propto p(x) \text{ i.i.d.}$$ Learn about $p$. ] ??? In unsupervised machine learning, we are just given data points x_i, that are assumed to be drawn from an unknown distribution. Usually we want to learn something about these, such as whether they lie on a low-dimensional subspace, or whether the data clusters in several groups, or find ways to represent the distribution compactly. The goal in unsupervised learning is often much less clear than in supervised learning, and there is no-one providing a “correct” answers and no supervisor. Common examples of unsupervised learning is discovering topics in news articles or on twitter, or grouping data into clusters for easier analysis. Another one is outlier detection, where you ask “does this data look normal” which is important for fraud detection and security systems. --- class: center, middle # Reinforcement Learning .left-column[  ] .right-column[  ] ??? The third kind, reinforcement learning, has been in the news quite a bit in the last year. Has anyone heard of that? Alpha go beat the world champion in go. Reinforcement learning is about an agent learning to interact with an environment, with some ultimate goal. The environment could be a go board, and the goal to win the game. For self-driving cars, the the environment could be roads, sensed by cameras and laser sensors, and the goal would be to get you somewhere quickly and safely. Or, the environment could be a social media platform, and the goal could be to provide you such great content that you never remove your eyes from your phone again! --- # Other kinds of learning - Semi-supervised - Active Learning - Forecasting - ... ??? There are other kinds of learning that are somewhere between the three kinds I just explained. Semi-supervised learning for example is a combination of supervised and unsupervised learning. Active learning is somewhere between reinforcement learning and supervised learning. There are also many kinds of supervised learning where the assumption that data points are independent is dropped, for example for time series analysis and forecasting. However, if you get the three main concepts, the rest will be easy to understand. Some people, including the local and famous Yann LeCun think that supervised learning is fundamentally limited. In particular it doesn’t seem to be how humans learn. So now you can buy these shirts on redbubble --- # Classification and Regression .left-column[ ### Classification - target y discrete - Is this patient sick? ] .right-column[ ### Regression - target y continuous - How long will it take for the patient to recover? ] ??? So getting back to supervised learning, there are two basic kinds, called classification and regression. The difference is quite simple: if y is continuous, then it’s regression, and if y is discrete, it’s classification. While it's simple, let me give an example. If I want to predict whether a one of you will pass the class, it’s a classification problem. There are two possible answers, “yes” and “no”. If I want to predict how many points you get on an exam, it’s a regression problem, there is a continuous, gradual output space. There are generalizations of this where we try to predict more than one variable, but we won’t go into that in this course. The main reason the distinction between classification and regression is important is because the way we measure how good a prediction is is very different for the two. It's not always entirely clear whether it's best to formulate a problem as classification or regression. If you think of predicting a 5-star rating, there's only 5 different possible outcomes, so you might think it's classification. But there is also an obvious ordering between the outcomes, which would make it a regression problem. Both formulations could work, and there are approaches that combine the two for this particular problem. --- # Generalization .left-column[ Not only <br /> also for new data: ] .right-column[$f(x_i) \approx y_i$,<br /> $f(x) \approx y$ ] ??? For both regression and classification, it’s important to keep in mind the concept of generalization. Let’s say we have a regression task. We have features, that is data vectors x_i and targets y_i drawn from a joint distribution. We now want to learn a function f, such that f(x) is approximately y, not on this training data, but on new data drawn from this distribution. This is what’s called generalization, and this is a core distinction to function approximation. In principle we don’t care about how well we do on x_i, we only care how well we do on new samples from the distribution. We’ll go into much more detail about generalization in about a week, when we dive into supervised learning. --- # Relationship to Statistics .left-column[ Statistics - model first - infence emphasis ] .right-column[ Machine learning - data first - prediction emphasis ] ??? Before I’ll go into some general principles, I want to position machine learning in relation to statistics. I recently got chewed out by a colleague for doing that. My goal here is not to say one is better than the other. Actually, there’s really no clear boundary between statistics and machine learning, and anyone that tells you otherwise is lying. Two of the books I recommended for the course are actually statistics text books. But I can tell you how the tools that I’m talking about in this course will differ from what you’d learn in a typical stats course. Statistics is usually about inference, often phrased in terms of hypothesis testing. An example might be a yes-no-question, such as “are women less likely to enroll in a Data Science Program”, and you have a sample population, for example this classroom, and you can then try to make an inference about whether this statement is true. Often this includes making assumptions on how your sample relates to the general population, say this class vs all of DSI or Columbia vs all of the US. You might also have a specific model of how the process behind your question works. --- # Relationship to Statistics .left-column[ Statistics - model first - infence emphasis ] .right-column[ Machine learning - data first - prediction emphasis ] ??? On the other hand, machine learning is about prediction and generalization. We want to learn from past data to predict outcomes on future, unseen data. We usually want to make statements about individual data points, and we want to build a model that will work on new data that fulfills our assumptions, independent of the population we samples. Often we don’t have or need a model of the process, but we rely on the assumption that our training data is generated from the same process as any future data will be. There are statisticians that do predictions and there are machine learning scientists that do inference, but I find this distinction helpful. Again I’m not saying one or the other is better, I’m just saying that you should know what kind of problem you are trying to solve, and what the right tool for the problem is. And then you can call it machine learning or statistics or probabilistic inference or data science. The tools you learn in this class will usually not help you to make yes/no inferences, and they will only give you a limited insight into the data generating process. --- class: middle # Goal considerations ??? One of the most important parts of machine learning is defining the goal, and defining a way to measure that goal. In this way, Kaggle is a really bad way to prepare you for machine learning in the real world, because they already did that work for you. In the real world, people don’t tell you whether you should use unsupervised learning, supervised learning, classification or regression, and what’s the right way to cast something as a machine learning task – or whether to cast it as machine learning at all. --- class: middle, center # Sidebar: Ethical Considerations <br/> .compact[https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing] ??? There is another area where explainability and transparency matter, and that is when people’s lives are at stake. One aspect of machine learning that only recently is getting some more attention is ethics. There was a recent article in propublica about racial bias in risk-assessment used in the criminal justice system. Spoiler alert: it’s bad. I recommend reading the article, it’s quite interesting. This is a black-box machine learning system created by some company. If they had to provide explanations, or a more transparent system, the situation would likely be better. But this is not the only place where ethics plays a role in machine learning. There will be a more focused course on ethics in the DSI next semester, and I really recommend looking into it. --- class: center # scikit-learn documentation  --- class: center # Representing Data  --- class: center # Training and Test Data  --- class: center, middle # Notebook: Data Loading ---