Gaël Varoquaux, Lars Buitinck, Gilles Louppe, Olivier Grisel, Fabian Pedregosa, and Andreas C. Müller:

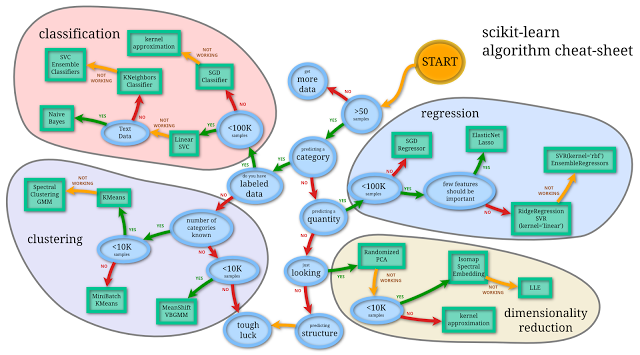

Scikit-learn: Machine Learning Without Learning the Machinery

GetMobile: Mobile Computing and Communications, 2015.

Alexandre Abraham, Fabian Pedregosa, Michael Eickenberg, Philippe Gervais, Andreas C. Müller, Jean Kossaifi, Alexandre Gramfort, Bertrand Thirion, Gaël Varoquaux:

Machine learning for neuroimaging with scikit-learn

Frontiers in Neuroinformatics, 2014.

Andreas C. Müller:

Methods for Learning Structured Prediction in Semantic Segmentation of Natural Images

PhD Thesis. Published 2014.

Andreas C. Müller and Sven Behnke:

PyStruct - Learning Structured Prediction in Python

Journal of Machine Learning Research (JMLR), 2014.

Andreas C. Müller and Sven Behnke:

Learning Depth-Sensitive Conditional Random Fields for Semantic Segmentation of RGB-D Images

In Proceedings of IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, May 2014.

Lars Buitinck, Gilles Louppe, Mathieu Blondel, Fabian Pedregosa, Andreas C. Müller, Olivier Grisel, Vlad Niculae, Peter Prettenhofer, Alexandre Gramfort, Jaques Grobler, Robert Layton, Jake Vanderplas, Arnaud Joly, Brian Holt, Gaël Varoquaux:

API design for machine learning software: experiences from the scikit-learn project

ECML PKDD 2013 Workshop on Languages for Data Mining and Machine Learning.

Andreas Müller and Sven Behnke:

Learning a Loopy Model For Semantic Segmentation Exactly

In Proceedings of 9th International Conference on Computer Vision Theory and Applications (VISAPP), Lisbon, January 2014.

Andreas Müller, Sebastian Nowozin and Christoph H. Lampert:

Information Theoretic Clustering using Minimum Spanning Trees

DAGM-OAGM, 2012.

Andreas Müller and Sven Behnke:

Multi-Instance Methods for Partially Supervised Image Segmentation

First IAPR Workshop on Partially Supervised Learning (PSL), Ulm 2011.

Hannes Schulz, Andreas Müller, and Sven Behnke:

Exploiting Local Structure in Boltzmann Machines

Neurocomputing 74(9):1411-1417, Elsevier, April 2011.

Hannes Schulz, Andreas Müller, and Sven Behnke:

Investigating Convergence of Restricted Boltzmann Machine Learning

NIPS 2010 Workshop on Deep Learning and Unsupervised Feature Learning, Whistler, Canada, December 2010.

Dominik Scherer, Andreas Müller, and Sven Behnke:

Evaluation of Pooling Operations in Convolutional Architectures for Object Recognition

20th International Conference on Artificial Neural Networks (ICANN), Thessaloniki, Greece, September 2010.

Andreas Müller, Hannes Schulz, and Sven Behnke:

Topological Features in Locally Connected RBMs

In the International Joint Conference on Neural Networks (IJCNN 2010).

Hannes Schulz, Andreas Müller, and Sven Behnke:

Exploiting local structure in stacked Boltzmann machines

In European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning (ESANN), Bruges, Belgium.